Instructions for using anakin87/LFM2-2.6B-mr-tictactoe with libraries, notebooks, and local apps.

- Libraries

- Transformers

How to use anakin87/LFM2-2.6B-mr-tictactoe with Transformers:

```python
# Use a pipeline as a high-level helper
from transformers import pipeline

pipe = pipeline("text-generation", model="anakin87/LFM2-2.6B-mr-tictactoe")
messages = [
    {"role": "user", "content": "Who are you?"},
]
print(pipe(messages))
```

```python
# Load model directly
from transformers import AutoTokenizer, AutoModelForCausalLM

tokenizer = AutoTokenizer.from_pretrained("anakin87/LFM2-2.6B-mr-tictactoe")
model = AutoModelForCausalLM.from_pretrained("anakin87/LFM2-2.6B-mr-tictactoe")
messages = [
    {"role": "user", "content": "Who are you?"},
]
# Render the chat template and tokenize in one step
inputs = tokenizer.apply_chat_template(
    messages,
    add_generation_prompt=True,
    tokenize=True,
    return_dict=True,
    return_tensors="pt",
).to(model.device)

outputs = model.generate(**inputs, max_new_tokens=40)
# Decode only the newly generated tokens, skipping the prompt
print(tokenizer.decode(outputs[0][inputs["input_ids"].shape[-1]:]))
```

- Notebooks

- Google Colab

- Kaggle

- Local Apps

- vLLM

How to use anakin87/LFM2-2.6B-mr-tictactoe with vLLM:

Install from pip and serve model

```bash
# Install vLLM from pip:
pip install vllm

# Start the vLLM server:
vllm serve "anakin87/LFM2-2.6B-mr-tictactoe"

# Call the server using curl (OpenAI-compatible API):
curl -X POST "http://localhost:8000/v1/chat/completions" \
    -H "Content-Type: application/json" \
    --data '{
        "model": "anakin87/LFM2-2.6B-mr-tictactoe",
        "messages": [
            {
                "role": "user",
                "content": "What is the capital of France?"
            }
        ]
    }'
```

Use Docker

```bash
docker model run hf.co/anakin87/LFM2-2.6B-mr-tictactoe
```

- SGLang

How to use anakin87/LFM2-2.6B-mr-tictactoe with SGLang:

Install from pip and serve model

```bash
# Install SGLang from pip:
pip install sglang

# Start the SGLang server:
python3 -m sglang.launch_server \
    --model-path "anakin87/LFM2-2.6B-mr-tictactoe" \
    --host 0.0.0.0 \
    --port 30000

# Call the server using curl (OpenAI-compatible API):
curl -X POST "http://localhost:30000/v1/chat/completions" \
    -H "Content-Type: application/json" \
    --data '{
        "model": "anakin87/LFM2-2.6B-mr-tictactoe",
        "messages": [
            {
                "role": "user",
                "content": "What is the capital of France?"
            }
        ]
    }'
```

Use Docker images

```bash
docker run --gpus all \
    --shm-size 32g \
    -p 30000:30000 \
    -v ~/.cache/huggingface:/root/.cache/huggingface \
    --env "HF_TOKEN=<secret>" \
    --ipc=host \
    lmsysorg/sglang:latest \
    python3 -m sglang.launch_server \
        --model-path "anakin87/LFM2-2.6B-mr-tictactoe" \
        --host 0.0.0.0 \
        --port 30000

# Call the server using curl (OpenAI-compatible API):
curl -X POST "http://localhost:30000/v1/chat/completions" \
    -H "Content-Type: application/json" \
    --data '{
        "model": "anakin87/LFM2-2.6B-mr-tictactoe",
        "messages": [
            {
                "role": "user",
                "content": "What is the capital of France?"
            }
        ]
    }'
```

- Docker Model Runner

How to use anakin87/LFM2-2.6B-mr-tictactoe with Docker Model Runner:

```bash
docker model run hf.co/anakin87/LFM2-2.6B-mr-tictactoe
```
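Both the vLLM server (port 8000) and the SGLang server (port 30000) expose the OpenAI-compatible `/v1/chat/completions` route used by the curl examples. As a hedged sketch, the same request can be sent from Python with only the standard library (`urllib.request`). The helper names `build_chat_request` and `chat` and the `max_tokens` default are illustrative choices, not part of either server's documentation.

```python
import json
import urllib.request

MODEL_ID = "anakin87/LFM2-2.6B-mr-tictactoe"
# Assumption: vLLM on its default port; use "http://localhost:30000/v1" for SGLang
BASE_URL = "http://localhost:8000/v1"


def build_chat_request(messages, max_tokens=256):
    """Build the JSON body of an OpenAI-compatible chat completion request."""
    return {
        "model": MODEL_ID,
        "messages": messages,
        "max_tokens": max_tokens,
    }


def chat(messages, base_url=BASE_URL):
    """POST the request to a running server and return the assistant's reply text."""
    body = json.dumps(build_chat_request(messages)).encode("utf-8")
    request = urllib.request.Request(
        f"{base_url}/chat/completions",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request) as response:
        payload = json.load(response)
    # OpenAI-compatible responses put the reply in choices[0].message.content
    return payload["choices"][0]["message"]["content"]
```

With a server running, `chat([{"role": "user", "content": "What is the capital of France?"}])` returns the model's answer as a string.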

LFM2-2.6B-mr-tictactoe

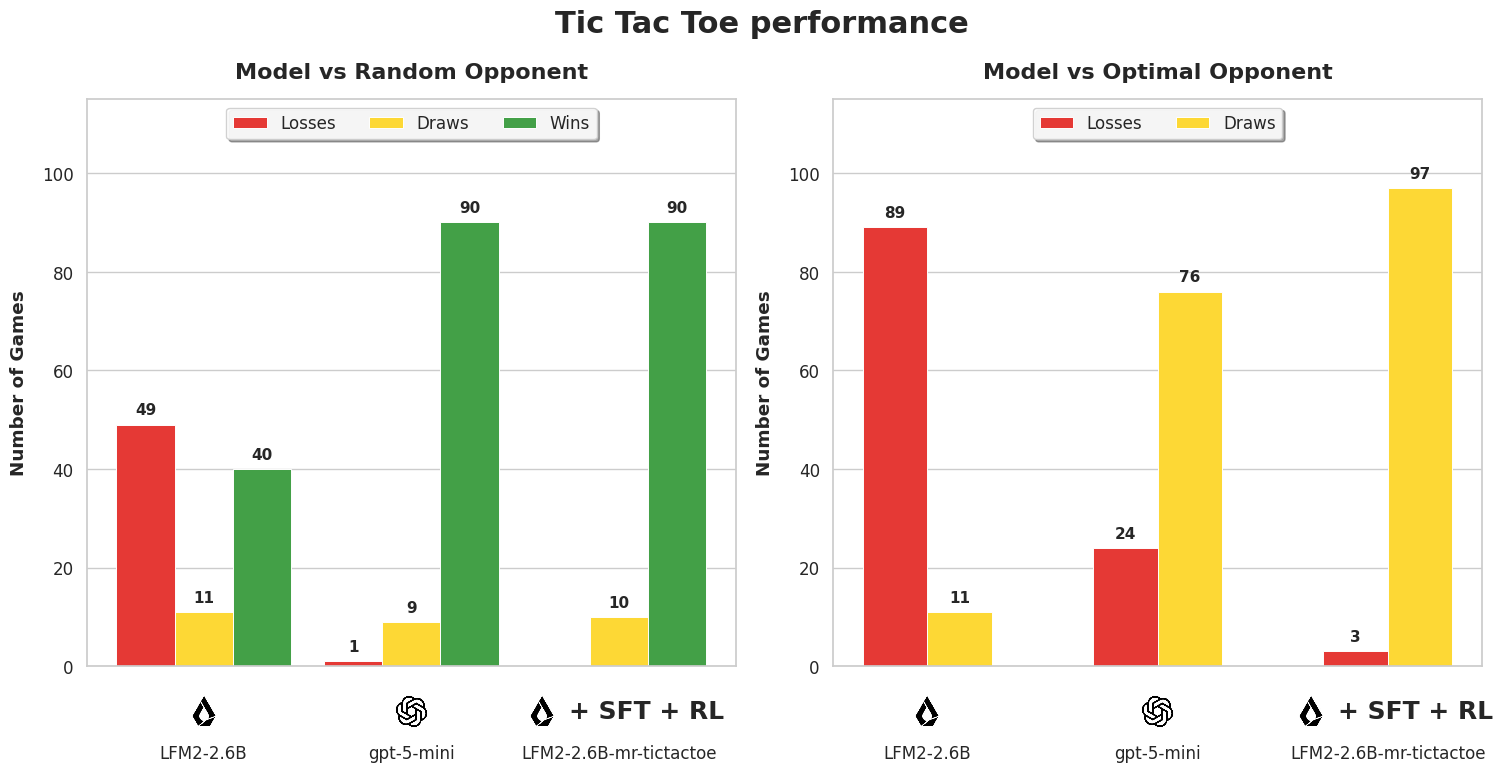

A 2.6B-parameter model that plays near-perfect Tic Tac Toe, outperforming openai/gpt-5-mini on this task: against an optimal opponent it draws 97% of games, compared with 76% for gpt-5-mini.

Built from LiquidAI/LFM2-2.6B through a full training pipeline: Supervised Fine-Tuning on synthetic data, followed by two rounds of Reinforcement Learning (CISPO) in a verifiable Tic Tac Toe environment.
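"Verifiable" means that every model turn can be checked by code rather than by a judge model: the reply either contains a parseable move or it does not, the move is either legal or it is not, and the finished game has an objective outcome. The sketch below illustrates that idea only. The `<move>N</move>` reply format and the 1.0 / 0.5 / 0.0 reward values are hypothetical and are not taken from the anakin87/tictactoe environment.

```python
import re

# Hypothetical reply format: the model writes <move>N</move>, N = cell 0-8 (row by row).
# The real environment's format may differ.
MOVE_RE = re.compile(r"<move>\s*([0-8])\s*</move>")

# All eight winning lines on a 3x3 board
WIN_LINES = [(0, 1, 2), (3, 4, 5), (6, 7, 8),
             (0, 3, 6), (1, 4, 7), (2, 5, 8),
             (0, 4, 8), (2, 4, 6)]


def winner(board):
    """Return "X" or "O" if that player has three in a row, else None."""
    for a, b, c in WIN_LINES:
        if board[a] != " " and board[a] == board[b] == board[c]:
            return board[a]
    return None


def check_turn(board, reply, player="X"):
    """Verify one model turn.

    Returns (new_board, error): error is None on success, "format" if no move
    could be parsed, or "invalid" if the chosen cell is already occupied.
    """
    match = MOVE_RE.search(reply)
    if match is None:
        return board, "format"
    cell = int(match.group(1))
    if board[cell] != " ":
        return board, "invalid"
    return board[:cell] + player + board[cell + 1:], None


def outcome_reward(final_board, player="X"):
    """Hypothetical terminal reward: 1.0 win, 0.5 draw, 0.0 loss (or unfinished board)."""
    w = winner(final_board)
    if w == player:
        return 1.0
    if w is None and " " not in final_board:
        return 0.5  # full board, nobody won
    return 0.0
```

Boards are 9-character strings (`" "` for empty cells), so a turn such as `check_turn(" " * 9, "I take the center. <move>4</move>")` yields a board with `"X"` at index 4 and no error.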

This model was developed as part of 🎓 LLM RL Environments Lil Course, a hands-on course on building RL environments for Language Models, where models learn from rewards, not examples. It walks through the full process of turning a small open model into a specialist that outperforms a large proprietary one on a specific task (Tic Tac Toe).

🤗🕹️ Play against Mr. Tic Tac Toe

Training pipeline

| Step | Model | Method |
|---|---|---|
| 1. SFT warm-up | anakin87/LFM2-2.6B-ttt-sft | SFT on 174 synthetic games from gpt-5-mini |
| 2. RL round 1 | anakin87/LFM2-2.6B-ttt-rl + merged | CISPO, 600 steps, opponents at 20-70% random |
| 3. RL round 2 | anakin87/LFM2-2.6B-ttt-rl-2 + this model | CISPO, 400 steps, opponents at 0-25% random, temp 1.25 |
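The "opponents at X% random" entries suggest a curriculum over opponent strength. One plausible reading, sketched here as an assumption rather than the environment's actual code, is an opponent that plays a uniformly random legal move with probability `p_random` and the minimax-optimal move otherwise, with `p_random` drawn per game from the stated range. The function names are hypothetical.

```python
import random
from functools import lru_cache

WIN_LINES = [(0, 1, 2), (3, 4, 5), (6, 7, 8),
             (0, 3, 6), (1, 4, 7), (2, 5, 8),
             (0, 4, 8), (2, 4, 6)]


def winner(board):
    """Return "X" or "O" if that player has three in a row, else None."""
    for a, b, c in WIN_LINES:
        if board[a] != " " and board[a] == board[b] == board[c]:
            return board[a]
    return None


@lru_cache(maxsize=None)
def minimax(board, to_move):
    """Exhaustive minimax over string boards.

    Returns (score, move) with score from X's view: +1 X wins, -1 O wins, 0 draw.
    """
    w = winner(board)
    if w is not None:
        return (1 if w == "X" else -1), None
    moves = [i for i, cell in enumerate(board) if cell == " "]
    if not moves:
        return 0, None
    nxt = "O" if to_move == "X" else "X"
    results = [(minimax(board[:m] + to_move + board[m + 1:], nxt)[0], m) for m in moves]
    # X maximizes, O minimizes; ties are broken by cell index
    return max(results) if to_move == "X" else min(results)


def opponent_move(board, p_random, rng=random):
    """Hypothetical O opponent: random legal move with probability p_random, else optimal."""
    legal = [i for i, cell in enumerate(board) if cell == " "]
    if rng.random() < p_random:
        return rng.choice(legal)
    return minimax(board, "O")[1]


def sample_p_random(low, high, rng=random):
    """Draw a per-game randomness level, e.g. (0.2, 0.7) for round 1, (0.0, 0.25) for round 2."""
    return rng.uniform(low, high)
```

Under this reading, lowering the range from 20-70% to 0-25% in round 2 makes the opponent much closer to optimal, so the model must learn to avoid losing rather than just exploit mistakes.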

Evaluation

100 games per setting. The model plays as X (first mover) against a Minimax-based opponent.

| Model vs random opponent | % Wins | % Draws | % Losses | % Follows format | % Games with invalid moves |
|---|---|---|---|---|---|
| openai/gpt-5-mini | 90 | 9 | 1 | 100 | 0 |
| LiquidAI/LFM2-2.6B | 40 | 11 | 49 | 27.8 | 40 |
| anakin87/LFM2-2.6B-mr-tictactoe | 90 | 10 | 0 | 100 | 0 |

| Model vs optimal opponent | % Wins | % Draws | % Losses | % Follows format | % Games with invalid moves |
|---|---|---|---|---|---|
| openai/gpt-5-mini | 0 | 76 | 24 | 100 | 0 |
| LiquidAI/LFM2-2.6B | 0 | 11 | 89 | 24.7 | 43 |
| anakin87/LFM2-2.6B-mr-tictactoe | 0 | 97 | 3 | 99.8 | 0 |
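The table columns can be computed from simple per-game records. In this sketch, wins, draws, losses and invalid-move games are percentages of the 100 games, while "follows format" is treated as a per-turn rate; that is an assumption, suggested by non-integer values such as 27.8 and 99.8 that a per-game rate over 100 games could not produce. `GameRecord` and `summarize` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class GameRecord:
    outcome: str            # "win", "draw" or "loss" from the model's (X) side
    turns: int              # number of model turns in the game
    well_formed_turns: int  # turns whose reply matched the expected format
    had_invalid_move: bool  # True if the model attempted an illegal move


def pct(count, total):
    """Percentage rounded to one decimal place, 0.0 when total is zero."""
    return round(100.0 * count / total, 1) if total else 0.0


def summarize(records):
    """Aggregate per-game records into the evaluation table columns."""
    n_games = len(records)
    n_turns = sum(r.turns for r in records)
    return {
        "wins": pct(sum(r.outcome == "win" for r in records), n_games),
        "draws": pct(sum(r.outcome == "draw" for r in records), n_games),
        "losses": pct(sum(r.outcome == "loss" for r in records), n_games),
        # Assumed per-turn rate (see lead-in), not per-game
        "follows_format": pct(sum(r.well_formed_turns for r in records), n_turns),
        "games_with_invalid_moves": pct(sum(r.had_invalid_move for r in records), n_games),
    }
```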

Training details

- Algorithm: CISPO (two rounds), using Verifiers RLTrainer

- Environment: anakin87/tictactoe (Verifiers environment)

- LoRA rank: 8

- Hardware: 2x NVIDIA RTX Pro 6000 (round 1), 2x NVIDIA H200 (round 2)

- Training time: ~8 hours per round

- W&B project: LFM2-2.6B Tic Tac Toe
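CISPO was introduced in the MiniMax-M1 report. Unlike PPO/GRPO-style clipping, which zeroes the gradient of tokens whose importance ratio leaves the trust region, CISPO clips the importance-sampling weight itself and treats it as a constant (stop-gradient), so every token still contributes through its log-probability. The sketch below computes that objective for one group of sampled completions in plain Python. It assumes GRPO-style group-normalized advantages and uses illustrative clipping bounds (`eps_low=1.0`, i.e. no effective lower bound, and `eps_high=0.2`); it is not the Verifiers RLTrainer implementation.

```python
import math


def group_advantages(rewards, eps=1e-6):
    """Group-normalized advantages: (R_i - mean) / (std + eps), shared by all tokens of completion i."""
    n = len(rewards)
    mean = sum(rewards) / n
    std = math.sqrt(sum((r - mean) ** 2 for r in rewards) / n)
    return [(r - mean) / (std + eps) for r in rewards]


def cispo_loss(new_logps, old_logps, rewards, eps_low=1.0, eps_high=0.2):
    """CISPO loss for one group of completions.

    new_logps / old_logps: per-completion lists of per-token log-probs under the
    current and the sampling (old) policy. Returns (loss, token_weights), where
    each weight is clip(ratio) * advantage. In an autograd framework the clipped
    ratio would be detached, so the gradient w.r.t. a token's log-prob is
    -weight / total_tokens.
    """
    advantages = group_advantages(rewards)
    total_tokens = sum(len(seq) for seq in new_logps)
    loss = 0.0
    weights = []
    for adv, new_seq, old_seq in zip(advantages, new_logps, old_logps):
        seq_weights = []
        for lp_new, lp_old in zip(new_seq, old_seq):
            ratio = math.exp(lp_new - lp_old)  # importance-sampling ratio
            clipped = min(max(ratio, 1.0 - eps_low), 1.0 + eps_high)
            weight = clipped * adv  # constant (stop-gradient) token weight
            seq_weights.append(weight)
            loss -= weight * lp_new  # maximize weighted log-likelihood
        weights.append(seq_weights)
    # Token-level normalization over the whole group
    return loss / total_tokens, weights
```

A token whose ratio is 2.0 gets its weight capped at 1.2 times its advantage, but it still receives a gradient; under PPO-style clipping with a positive advantage, the same token would contribute nothing.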


Base model: LiquidAI/LFM2-2.6B